WhatsApp itself is not primarily responsible for the violence, and shouldn’t be coerced into abridging the freedom of its users.

WhatsApp has taken several steps in response to the union government: tagging forwarded messages as such, testing “a lower limit of five chats at once”, removing “the quick forward button next to media messages”, and promoting digital literacy. It plans to introduce its “fake news verification model” in India ahead of the general elections in 2019, and has announced a public competition to identify ways to counter the spread of mala fide information.

Yet, in the absence of any measures of its own to deter and punish violent lynch mobs, the government’s determination to move against one specific channel of communication could well end up as a wild goose chase.

Conceptually, laying all the blame at WhatsApp’s door is a lot like blaming bus service operators for allowing criminals to ride to the scene of the crime. This is not to say that the bus operator has no role to play in preventing the crime, but that it is not solely or primarily the operator’s fault that criminals ride its buses to work.

Now, it is reasonable to argue that bus operators must report suspicious passengers and have a duty to prevent criminal activities if they become aware of them.

However, to give bus operators the power to require passengers to furnish their criminal records, or demand to know the purpose of the journey, or plant bugs under the seats to find out if passengers are planning a crime would be ghastly transgressions of liberty.

While it is reasonable for the government to require WhatsApp to act against the spread of mala fide information that results in violence, we must be clear that WhatsApp isn’t primarily responsible for the violence, and it shouldn’t be coerced into abridging the freedoms of its users. As I’ve written elsewhere, lynchings can be easily deterred if union and state governments take exemplary action to punish the culprits, and leaders use their political capital to speak out against this barbaric trend.

That’s one thing. But what is it that WhatsApp can do?

Maybe some of the measures announced this week will prove to be effective. But here’s a simple thing that WhatsApp can do, and something that the ministry of electronics and information technology (MEITY) can require all social media platforms to implement: insert customised contextual warnings in users’ message streams, much like the statutory warnings on cigarette packets and on some kinds of television programmes.

The Economist often includes a notice in its classifieds section, alerting readers to make their own enquiries on the advertisements appearing on those pages. WhatsApp, Facebook, Twitter, Instagram and their like can do something similar and better. The disclaimers can be made to appear based on the users’ usage patterns, preferred language and media type.

As much as general digital literacy is necessary, a contextual ‘nudge’ asking the user to verify before believing, exercise discretion before forwarding and be mindful of applicable laws – at times, places, languages and media formats specific to the user – is likely to be more effective in preventing malicious rumours from spreading. Senders of frequent message forwards and their recipients can, for instance, receive more frequent and obtrusive disclaimers. The same can be done at places and times where there is a perceived risk of violence.

Since the social media platform needs only to use metadata it already has, it neither needs to know the content of its users’ messages nor indiscriminately restrict its features. Indeed, with the amount of data in their possession, it is relatively easy for social media platforms to develop predictive models that can be used to identify high-risk situations and pre-empt them by pushing alert messages.
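To make the idea concrete, here is a rough sketch in Python of how such a nudge might be scheduled from metadata alone. It is an illustration under stated assumptions, not a description of how WhatsApp or any other platform works: the `UserMetadata` fields, the thresholds and the warning text are all hypothetical, and a real system would presumably replace the simple rules with the kind of predictive model described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UserMetadata:
    """Hypothetical metadata only; no message content is inspected."""
    forwards_last_24h: int    # how many messages the user forwarded recently
    preferred_language: str   # e.g. "en"
    in_high_risk_area: bool   # place/time flagged as carrying a risk of violence


# Assumed values, chosen purely for illustration.
FREQUENT_FORWARDER_THRESHOLD = 20
DEFAULT_INTERVAL = 10   # show a warning on every 10th forwarded message
FREQUENT_INTERVAL = 3   # frequent forwarders see it on every 3rd

# Warning text per language; only English is filled in here.
WARNINGS = {
    "en": "This message was forwarded. Verify before you believe or share it.",
}


def nudge_interval(meta: UserMetadata) -> int:
    """Return N such that a warning accompanies every Nth forwarded message."""
    if meta.forwards_last_24h >= FREQUENT_FORWARDER_THRESHOLD:
        interval = FREQUENT_INTERVAL
    else:
        interval = DEFAULT_INTERVAL
    if meta.in_high_risk_area:
        # Warn twice as often where violence is feared, at most on every message.
        interval = max(1, interval // 2)
    return interval


def warning_for(meta: UserMetadata, forwarded_seen: int) -> Optional[str]:
    """Return warning text for the user's Nth forwarded message, or None."""
    if forwarded_seen % nudge_interval(meta) != 0:
        return None
    # Fall back to English when no translation exists for the user's language.
    return WARNINGS.get(meta.preferred_language, WARNINGS["en"])
```

In this sketch an occasional user sees the disclaimer on every tenth forwarded message, while a frequent forwarder in a flagged area sees it on every one. The only inputs are counts, language and location, which is the point: the nudge becomes more obtrusive where the risk is higher without anyone reading the messages.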

The best thing is that such messages promote overall good communications hygiene and are not merely useful in maintaining law and order.

Ideally, WhatsApp and other social media platforms could voluntarily implement such a feature, either on their own or jointly in the form of industry self-regulation. This is the best method because once the platforms are sensitised to the policy objectives, they will have both the incentive and the flexibility to innovate and use the best methods to address the problem. That is why MEITY should use its soft, convening power to bring heads together and encourage them to evolve voluntary commitments.

If such an approach does not work, MEITY could require all social media platforms to insert regular, contextual statutory warnings. This is unlikely to be as effective as voluntary commitments (because it’ll become a mere compliance game) but will still be an improvement over the status quo.

The upshot is that it is possible to evolve a cooperative relationship between the government and private social media platforms to use technology to tackle a social problem. This will work to the extent the government’s intention to tackle it is credible. It would do well to look for solutions, not mere scapegoats.

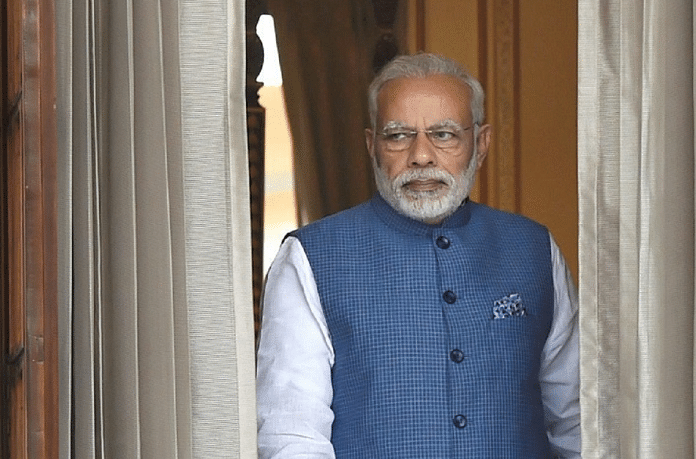

Nitin Pai is director of the Takshashila Institution, an independent centre for research and education in public policy.