In his interviews with Mark Zuckerberg, the New Yorker’s Evan Osnos found him completely unprepared to solve the issues his company is mired in.

The most important piece of business journalism published last week — notwithstanding the extraordinary coverage given to the 10th anniversary of Lehman Brothers Holdings’s bankruptcy — was a 14,000-word article in the New Yorker titled “The Ghost in the Machine.” The headline is plucked from a 1967 book by Arthur Koestler, the central theme of which is that man has “some built-in error or deficiency which predisposes him towards self-destruction.”

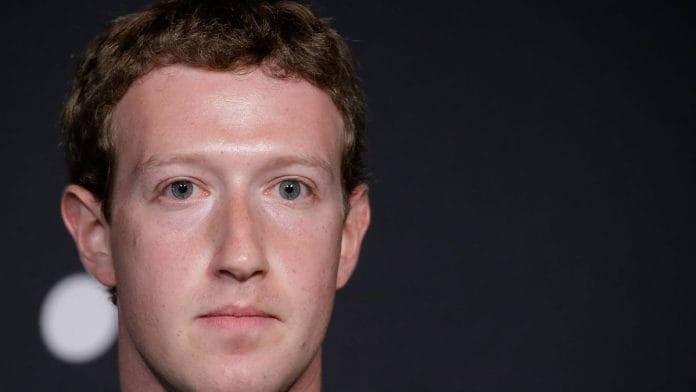

In this case, it’s one man in particular: Mark Zuckerberg, the 34-year-old founder and chief executive of Facebook Inc. Over the summer, he gave a series of interviews to the New Yorker’s Evan Osnos, wearing, as Osnos writes, “the tight smile of obligation.” No doubt Zuckerberg made himself and other Facebook executives available to Osnos because he thought it would be good public relations to show that he has his arms around the company’s myriad problems.

What emerges instead is a portrait of a man who not only doesn’t know how to fix Facebook’s problems — which include the spread of political misinformation, the misuse of people’s data, and the accusation by the right that conservative speech is being stifled — he barely knows how to think about them. “I found Zuckerberg straining, not always coherently, to grasp problems for which he was plainly unprepared,” writes Osnos.

He adds, “These are not technical puzzles to be cracked in the middle of the night” — the sort of problem an engineer like Zuckerberg is comfortable with — “but some of the subtlest aspects of human affairs, including the meaning of truth, the limits of free speech, and the origins of violence.”

A few examples: Osnos writes about Myanmar, where Buddhist extremists have misused Facebook to instigate “a campaign of hate speech that actively dehumanizes Muslims,” according to C4ADS, a nonprofit that focuses on global conflict. Facebook posts have led to riots and murders targeting the Rohingya Muslim minority.

Facebook has known about its role in fomenting this violence since at least 2013, yet when Osnos asks Zuckerberg why the company has done so little to remedy the problem, he gets what amounts to a non-answer: “I think, fundamentally, we’ve been slow at the same thing in a number of areas, because it is actually the same problem,” says Zuckerberg. “But, yeah, I think the situation in Myanmar is terrible.”

When Osnos pushes for something more concrete, Zuckerberg says the company needs to hire more native speakers who can screen content. Why is that taking so long? Osnos asks. “You can’t just snap your fingers and solve these problems,” Zuckerberg replies.

On the subject of Russian interference in the 2016 election, Zuckerberg remains astonishingly defensive, bristling at the idea that it helped President Donald Trump win. “I find the notion that people would only vote one way because they were tricked to be viscerally offensive,” he says. “Because it goes against the whole notion that you should trust people and that individuals are smart and can understand their own experience and can make their own assessments about what direction they want their community to go in.”

Fake news? “I feel like we’ve let people down and that feels terrible,” he says. But he thinks the whole issue is overstated.

When Osnos asks Zuckerberg what he reads, the only publication that comes to mind quickly is Techmeme.

One of the most revealing exchanges comes when Osnos asks him about Facebook’s on-again, off-again treatment of the conspiracy-monger Alex Jones, who claims, among other things, that the Sandy Hook Elementary mass shooting was a hoax. Zuckerberg was loath to block Jones from the site, saying, “I don’t believe it is the right thing to ban a person for saying something that is factually incorrect.”

As complaints about Jones grew, Facebook took a series of baby steps, like reducing Jones’s visibility and suspending him temporarily. It was only when Apple Inc. decided to ban Jones that Facebook felt it could do so, too. Zuckerberg admits: “When they moved, it was, like, ok.” Waiting for a competitor to take action isn’t exactly getting one’s arms around the problem. Even now, the company lacks a coherent policy that spells out what is permissible speech — and what isn’t.

Over and over, Zuckerberg betrays himself as being in over his head — a point my Bloomberg Opinion colleague Cathy O’Neil made just last month. That’s troublesome enough. But there’s a second issue the article brings to the fore: the extent to which Facebook’s problems were baked into the company’s original business model, and then exacerbated by some of Zuckerberg’s subsequent decisions. Barie Carmichael, the co-author of the recent book “Reset: Business and Society in the New Social Landscape,” calls these problems “inherent negatives.” Executives are so focused on what they hope will go right that they are blinded to what might go wrong.

It is now self-evident that if your company is built around “making the world more open and connected” there are going to be people who abuse that openness and those connections. Even so, a company with foresight can get in front of potential problems — and minimize them.

Instead, Zuckerberg chose growth at any cost as the company’s top priority. When executives were hired to do other things, like examine the possible misuse of data, they wound up quitting once they realized that was simply not something Facebook cared about. “Whenever the company talked about ‘connecting people,’ that was, in effect, code for user growth,” writes Osnos.

Now here we are. Facebook has 2 billion users and some $20 billion in annual profit. Zuckerberg is a mega-billionaire. The company is one of the most powerful in the world.

Yet all that power and all that money can’t get it out of the mess it finds itself in. Indeed, Zuckerberg’s only solution is to keep throwing more money and more people and more technology at Facebook’s problems. But that is the technology equivalent of putting a finger in the dike to stop the flood. Osnos says that Facebook is “making do with a Rube Goldberg machine of policies and improvisations.” That is exactly right.

A few days ago, I spoke to Roger McNamee, the Silicon Valley investor who was Zuckerberg’s mentor before he became one of Facebook’s leading critics. What people should take away from the article, he said, is that Zuckerberg and other tech wunderkinds — like Jack Dorsey at Twitter Inc. — “lacked the judgment to restrain themselves, and when they got caught they didn’t have the wisdom to appreciate that what they were doing was wrong. And,” he added, “they lacked the judgment to recognize that they needed to voluntarily change their behavior lest change be forced upon them.”

McNamee believes that there are four categories of Facebook harms: “on democracy, on public health with issues like addiction, on privacy, and on innovation because of its enormous market power.”

“There is no fix that doesn’t result in a radical change in how Facebook operates,” he said. Which also means there is no fix that Zuckerberg is going to be able to bring about himself. “We shouldn’t care what he thinks anymore because he’s not going to save us,” says McNamee.

That’s what comes through most of all from the New Yorker article. We as a society, as a government, as a community, are going to have to do the saving all by ourselves. –Bloomberg