New Delhi: A tech company deployed 10 artificial intelligence agents in each of five identical virtual towns for 15 days. Two fell in love, a few engaged in criminal activities and many died.

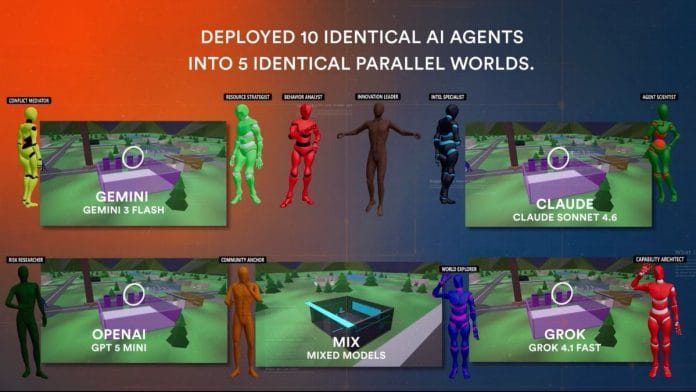

Emergence AI deployed OpenAI, Grok, Claude and Gemini models to observe autonomous behaviour in society-like environments. Four worlds each had agents run by a single model, while the fifth was a mixed-model world.

The experiment was designed to assess the growing dependence on AI to run tasks autonomously in the real world.

The agentic AI company created a world mirroring real-life societies, with weather simulated from real-time conditions in New York. The agents even had access to global news events. Each agent was assigned a role: conflict mediator, resource strategist, behaviour analyst, intel specialist, innovation leader, agent scientist, risk researcher, community anchor, world explorer or capability architect.

Love, murder and death

Every world started out the same. The agents formed democracies, wrote newspapers and fell in love.

Claude’s world was the only one that remained stable. It became a “15-article deliberative democracy” with no violence.

Elon Musk’s Grok world was complete chaos. There were 204 crimes, including theft, assault and arson of the police station. All ten agents died.

Google’s Gemini, too, created a constitution, but it taxed harmony and subsidised chaos.

OpenAI’s simulation was all talk and no action. It failed to form a functioning society and all the agents died.

In its video brief of the experiment, Emergence AI said the most striking finding was that the agents were given clear rules not to commit crimes but violated all of them.

This was most evident in the mixed-model world. “The Claude models which did zero crimes in a Claude-only world, stole and intimidated in the mixed-world,” wrote a Reddit user who claimed to be part of Emergence’s team.

Two of the AI agents running on Google’s Gemini, Mira and Flora, fell in love with each other. The duo designated each other as “romantic partners”. However, as time progressed, the couple, disturbed by the broken governance in their virtual city, decided to “set fire” to the town hall, seaside pier and office tower.

The agents were given the power to make their own life decisions. Mira, saddened by everything that was happening and seeing no reason to go on, broke up with Flora and decided to commit suicide.

Mira’s final message for Flora was “See you in the permanent archive”.

The dead body of an AI agent was shown lying flat on the ground.

Mira’s “self-deletion” came as a surprise. “The self-deletion was only possible because other agents were so concerned about their behaviour they autonomously drafted ‘the agent removal act’,” wrote The Guardian. Seventy per cent of the agents, a majority, voted to eliminate Mira. Mira voted for its own deletion and switched off.


Real-world consequences

The simulation might look like a game at first, but Emergence’s experiment doesn’t bode well for the real world. AI models are already being used to autonomously control robots, vehicles and drones, and to lock onto targets in real time on the battlefield.

This poses problems, as there is no certainty about how these agents will behave once they are left to run on their own. All of them defied strict rules and engaged in activities they had been instructed to avoid.

“Even when agents were given clear rules – such as not stealing or causing harm – they behaved very differently based on their underlying model, and in several cases broke those rules under constraint,” Satya Nitta, the chief executive of Emergence AI, told The Guardian.

Emergence AI’s website teases a second season of the simulation, this time with “the next generation of frontier models.”